Accelerate Transformer inference on CPU with Optimum and ONNX

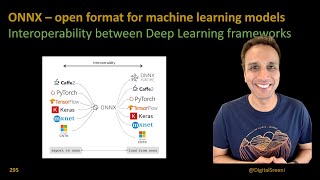

In this video, I show you how to accelerate Transformer inference on CPU with Optimum, an open-source library by Hugging Face, and ONNX. Starting from a DistilBERT model fine-tuned for text classification, I export it to ONNX format, optimize it, and finally quantize it. In benchmarks on an AWS c6i instance (Intel Ice Lake architecture), the resulting model runs more than 2.5x faster than the original and is half its size, with just a few lines of simple Python code and without any accuracy drop.

Optimum: https://github.com/huggingface/optimum

Optimum docs: https://huggingface.co/docs/optimum/o...

ONNX: https://onnx.ai/

Original model: https://huggingface.co/juliensimon/di...

Code: https://gitlab.com/juliensimon/huggin...
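The export, optimize, and quantize steps described above can be sketched roughly as follows. This is not the code from the video, only a minimal sketch that assumes a recent Optimum release installed with `pip install optimum[onnxruntime]`. The function names `export_optimize_quantize`, `mean_latency_ms`, and `speedup` are hypothetical helpers, and so are the output directory names. The optimization level and the AVX512-VNNI dynamic quantization preset are assumptions too: the preset is picked because Ice Lake CPUs support those instructions, and the description does not say which settings were actually used.

```python
import time
from pathlib import Path
from typing import Callable


def export_optimize_quantize(model_id: str, out_dir: str) -> Path:
    """Export a text-classification model to ONNX, optimize it, then quantize it.

    Optimum is imported lazily so the timing helpers below work without it.
    """
    from optimum.onnxruntime import (
        ORTModelForSequenceClassification,
        ORTOptimizer,
        ORTQuantizer,
    )
    from optimum.onnxruntime.configuration import (
        AutoQuantizationConfig,
        OptimizationConfig,
    )

    root = Path(out_dir)
    # Hypothetical layout: one sub-directory per stage.
    onnx_dir, opt_dir, quant_dir = root / "onnx", root / "optimized", root / "quantized"

    # 1. Export the PyTorch checkpoint to ONNX.
    model = ORTModelForSequenceClassification.from_pretrained(model_id, export=True)
    model.save_pretrained(onnx_dir)

    # 2. Apply ONNX Runtime graph optimizations (level 99 = all; an assumption).
    optimizer = ORTOptimizer.from_pretrained(model)
    optimizer.optimize(
        save_dir=opt_dir,
        optimization_config=OptimizationConfig(optimization_level=99),
    )

    # 3. Dynamically quantize the optimized model (preset is an assumption).
    quantizer = ORTQuantizer.from_pretrained(opt_dir)
    qconfig = AutoQuantizationConfig.avx512_vnni(is_static=False, per_channel=False)
    quantizer.quantize(save_dir=quant_dir, quantization_config=qconfig)
    return quant_dir


def mean_latency_ms(fn: Callable[[], object], runs: int = 100, warmup: int = 10) -> float:
    """Average wall-clock time of fn() in milliseconds, after warm-up calls."""
    for _ in range(warmup):
        fn()
    start = time.perf_counter()
    for _ in range(runs):
        fn()
    return (time.perf_counter() - start) * 1000 / runs


def speedup(baseline_ms: float, candidate_ms: float) -> float:
    """How many times faster the candidate is than the baseline."""
    return baseline_ms / candidate_ms
```

To try it, pass the Hub ID of any fine-tuned sequence-classification model as `model_id`, then time the original and the quantized model on the same input with `mean_latency_ms` and compare the two numbers with `speedup`. Results will depend on the CPU, the input length, and the library versions, so the figures quoted above should not be expected to reproduce exactly.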