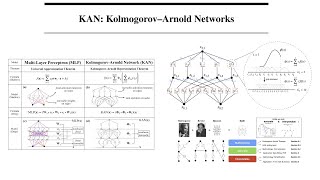

KAN: Kolmogorov-Arnold Networks

A Google Algorithms Seminar TechTalk, presented by Ziming Liu, 2024-06-04

ABSTRACT: Inspired by the Kolmogorov-Arnold representation theorem, we propose Kolmogorov-Arnold Networks (KANs) as promising alternatives to Multi-Layer Perceptrons (MLPs). While MLPs have fixed activation functions on nodes ("neurons"), KANs have learnable activation functions on edges ("weights"). KANs have no linear weights at all -- every weight parameter is replaced by a univariate function parametrized as a spline. We show that this seemingly simple change makes KANs outperform MLPs in terms of accuracy and interpretability. For accuracy, much smaller KANs can achieve comparable or better accuracy than much larger MLPs in data fitting and PDE solving. Theoretically and empirically, KANs possess faster neural scaling laws than MLPs. For interpretability, KANs can be intuitively visualized and can easily interact with human users. Through two examples in mathematics and physics, KANs are shown to be useful collaborators helping scientists (re)discover mathematical and physical laws. In summary, KANs are promising alternatives for MLPs, opening opportunities for further improving today's deep learning models which rely heavily on MLPs.

ABOUT THE SPEAKER: Ziming Liu is a fourth-year PhD student at MIT & IAIFI, advised by Prof. Max Tegmark. His research interests lie at the intersection of AI and physics (science in general):

Physics of AI: “AI as simple as physics”

Physics for AI: “AI as natural as physics”

AI for physics: “AI as powerful as physicists”

He publishes papers in both top physics journals and AI conferences. He serves as a reviewer for Physical Review, NeurIPS, ICLR, IEEE, etc. He co-organized the AI4Science workshops.

His research has a strongly interdisciplinary nature, e.g., Kolmogorov-Arnold networks (Math for AI), Poisson Flow Generative Models (Physics for AI), Brain-inspired modular training (Neuroscience for AI), understanding Grokking (Physics of AI), conservation laws and symmetries (AI for physics).
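
To make the abstract's central idea concrete (learnable univariate functions on edges instead of scalar weights), here is a minimal NumPy sketch of a single KAN-style layer. It is an illustration under stated assumptions, not the speaker's implementation: the names `hat_basis` and `KANLayerSketch` are hypothetical, the splines are piecewise-linear on a fixed uniform grid for brevity, and training is omitted. Each output is computed as a sum over inputs of an edge function applied to that input.

```python
import numpy as np


def hat_basis(x, grid):
    """Piecewise-linear (degree-1 spline) basis on a uniform grid.

    x: shape (batch,), grid: shape (G,) with uniform spacing.
    Returns shape (batch, G); each row sums to 1 for x inside the grid.
    """
    h = grid[1] - grid[0]
    return np.clip(1.0 - np.abs(x[:, None] - grid[None, :]) / h, 0.0, None)


class KANLayerSketch:
    """Hypothetical KAN-style layer: one learnable 1-D function per edge."""

    def __init__(self, n_in, n_out, grid_size=8, x_min=-1.0, x_max=1.0, seed=0):
        rng = np.random.default_rng(seed)
        self.grid = np.linspace(x_min, x_max, grid_size)
        # Assumption: each edge (output j, input i) owns its own spline
        # coefficients, so there is no scalar weight matrix at all.
        self.coef = 0.1 * rng.standard_normal((n_out, n_in, grid_size))

    def __call__(self, x):
        # x: (batch, n_in); inputs are clamped to the spline's grid range.
        x = np.clip(x, self.grid[0], self.grid[-1])
        # basis[b, i, g] = value of basis function g at input x[b, i]
        basis = np.stack(
            [hat_basis(x[:, i], self.grid) for i in range(x.shape[1])], axis=1
        )
        # y[b, j] = sum_i phi_{j,i}(x[b, i]), where
        # phi_{j,i}(t) = sum_g coef[j, i, g] * basis_g(t)
        return np.einsum("big,jig->bj", basis, self.coef)


if __name__ == "__main__":
    layer = KANLayerSketch(n_in=2, n_out=3, grid_size=5)
    print(layer(np.array([[0.0, 0.5], [-1.0, 1.0]])).shape)
```

With piecewise-linear splines, each edge function simply interpolates its coefficients at the grid points, which also hints at why such layers are easy to plot and inspect: every edge is a one-dimensional curve.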